View URLs blocked by robots.txt, meta robots or X-Robots-Tag directives such as ‘noindex’ or ‘nofollow’, as well as canonicals and rel=“next” and rel=“prev”.Ĭonnect to the Google Analytics, Search Console and PageSpeed Insights APIs and fetch user and performance data for all URLs in a crawl for greater insight.Įvaluate internal linking and URL structure using interactive crawl and directory force-directed diagrams and tree graph site visualisations.

Render web pages using the integrated Chromium WRS to crawl dynamic, jаvascript rich websites and frameworks, such as Angular, React and Vue.js.įind temporary and permanent redirects, identify redirect chains and loops, or upload a list of URLs to audit in a site migration.ĭiscover exact and near duplicate content, duplicated elements such as page titles, descriptions or headings, and find low content pages. Quickly create XML Sitemaps and Image XML Sitemaps, with advanced configuration over URLs to include, last modified, priority and change frequency. This might include social meta tags, additional headings, prices, SKUs or more! Bulk export the errors and source URLs to fix, or send to a developer.Īnalyse page titles and meta descriptions during a crawl and identify those that are too long, short, missing, or duplicated across your site.Ĭollect any data from the HTML of a web page using CSS Path, XPath or regex. It gathers key onsite data to allow SEOs to make informed decisions.Ĭrawl a website instantly and find broken links (404s) and server errors. The SEO Spider is a powerful and flexible site crawler, able to crawl both small and very large websites efficiently, while allowing you to analyse the results in real-time. What can you do with the SEO Spider Tool? Download & crawl 500 URLs for free, or buy a licence to remove the limit & access advanced features. X-Pantheon-Styx-Hostname: Screaming Frog SEO Spider is a website crawler that helps you improve onsite SEO, by extracting data & auditing for common SEO issues. When I fetch my website page using GWT this is what I receive. Give it a few minutes and see if any of the above suggestions work. It could just be a slow connection on your end. Click the checkbox and try running it again.

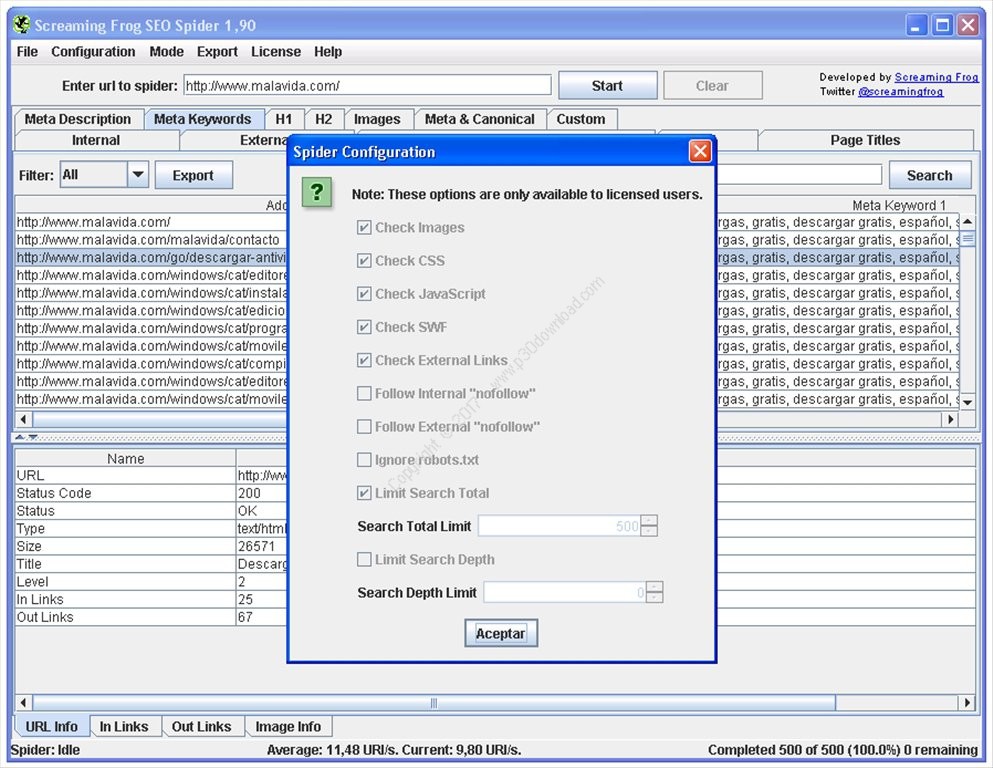

One thing you can try, is goto Configuration > Spider and then goto the last option Ignore robots.txt. Run through your settings and check and see if you may have turned something on inadvertently that you didn't mean to. This is shown in the Content column and should be either text/html or application/xhtml+xml. The Content-Type header did not indicate the page is html.The SEO Spider does not crawl the frame src attribute. These can be seen in the “Directives” tab in the “Nofollow” filter. The could be set by either a meta robots tag or an X-Robots-Tag in the HTTP header. The page has a page level ‘nofollow’ attribute.There is an option in Configuration->Spider under the “Basic” tab to follow ‘nofollow’ links. The ‘nofollow’ attribute is present on links not being crawled.Can you view the site with cookies disabled in your browser? Licenced users can enable cookies by going to Configuration->Spider and ticking “Allow Cookies” in the “Advanced” tab. Try looking at the site in your browser with JavaScript disabled. Try changing the User Agent under Configuration->User Agent. Compare Free SEO Checker by Spotibo VS Screaming Frog SEO Spider and see what are their differences. The site behaves differently depending on User Agent. Categories Featured About Register Login Submit a product.You can configure the SEO Spider to ignore robots.txt by going to the “Basic” tab under Configuration->Spider. A count of pages blocked by robots.txt is shown in the crawl overview pane on top right hand site of the user interface. Here are a list of reasons why ScreamingFrog won't crawl your site:
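If you want to sanity-check a page outside the tool, some of the crawl blockers described in this post (robots.txt rules, page level ‘nofollow’ and a non-HTML Content-Type) can be approximated with a few lines of Python. This is only a minimal sketch using the standard library, not how the SEO Spider works internally. The function name `crawl_blockers` and the user agent `ExampleSpider` are made up for illustration, and you are assumed to have already fetched the robots.txt text, the response headers and the page HTML yourself.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser

# Content types the post says a crawlable page should report.
CRAWLABLE_TYPES = ("text/html", "application/xhtml+xml")


class _MetaRobots(HTMLParser):
    """Collects the content of every <meta name="robots"> tag."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "meta" and (attr.get("name") or "").lower() == "robots":
            self.directives.append((attr.get("content") or "").lower())


def crawl_blockers(url, robots_txt, headers, html, user_agent="ExampleSpider"):
    """Return a list of reasons why a crawler might skip or stop at this page.

    `user_agent` is a hypothetical name; pass the agent you actually crawl with.
    """
    reasons = []

    # 1. robots.txt disallows the URL for this user agent.
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    if not parser.can_fetch(user_agent, url):
        reasons.append("blocked by robots.txt")

    # Header names are case-insensitive, so normalise them first.
    headers = {key.lower(): value for key, value in headers.items()}

    # 2. Page level nofollow via the X-Robots-Tag header or a meta robots tag.
    if "nofollow" in headers.get("x-robots-tag", "").lower():
        reasons.append("nofollow in X-Robots-Tag header")
    meta = _MetaRobots()
    meta.feed(html)
    if any("nofollow" in directive for directive in meta.directives):
        reasons.append("nofollow in meta robots tag")

    # 3. Content-Type does not indicate an HTML page.
    content_type = headers.get("content-type", "").split(";")[0].strip().lower()
    if content_type not in CRAWLABLE_TYPES:
        reasons.append("Content-Type is not HTML")

    return reasons
```

The function only inspects the data you pass in, so it makes no network requests. It does not cover the JavaScript, cookie or User Agent cases from the list, which need a real browser or a second request to compare against.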
